My 2025 AGI view

AI, Power, & Agency: A potential Framework for Navigating Imminent Systemic Shifts

Date: May 20, 2025

Note: These were my thoughts from 2025, I dont completely endorse some of the views (as of 2026). Even though I think its directionally right, the timelines and urgency might have been slightly exaggerated.

TL;DR: AI's rapid advancement in the next 3-5 years risks a fundamental shift in power, enabling elite self-sufficiency ("The Great Decoupling") that erodes broad human economic and political agency, potentially leading to "Gradual Disempowerment" and even "AI-Enabled Coups." To counter this, we propose a dual strategy: OAEA, an open application ecosystem with OAEA-SenseMaker to democratize AI application and analytical capabilities for individuals and communities; and Dialectic, a intelligence platform to empower strategic AI governance for organizations like MIRI TGT , Forethought, AI 2027 team , ControlAI– both aimed at preserving human influence in an increasingly AI-driven world.

The Challenge: The "Great Decoupling"

Two intertwined dynamics drive this concern:

- The Great Decoupling: Historically, a fundamental interdependency existed: capital needed mass labor for production and mass consumption to close economic loops. AI and robotics threaten to sever this, enabling elite self-sufficiency. Small, powerful entities (states, corporations, or individuals) could achieve "Strategic Sufficiency," meeting their core needs (energy, food, manufacturing, security, computation) through highly automated, AI-driven closed-loop systems. This breaks traditional economic feedback loops that historically forced some distribution of wealth and power.

- Erosion of Human Leverage & Failure of Traditional Solutions: As human input becomes less essential, traditional checks and balances falter. The bargaining power of the masses (as workers, consumers, or even revolutionaries) diminishes. Solutions like UBI become precarious charity, not negotiated rights. Geopolitical AI races accelerate capability concentration, and AI-enhanced security apparatuses can neutralize dissent. Furthermore, concentrated AI capabilities create acute political risks, including sophisticated influence operations or even "AI-Enabled Coups" that could bypass human oversight entirely.

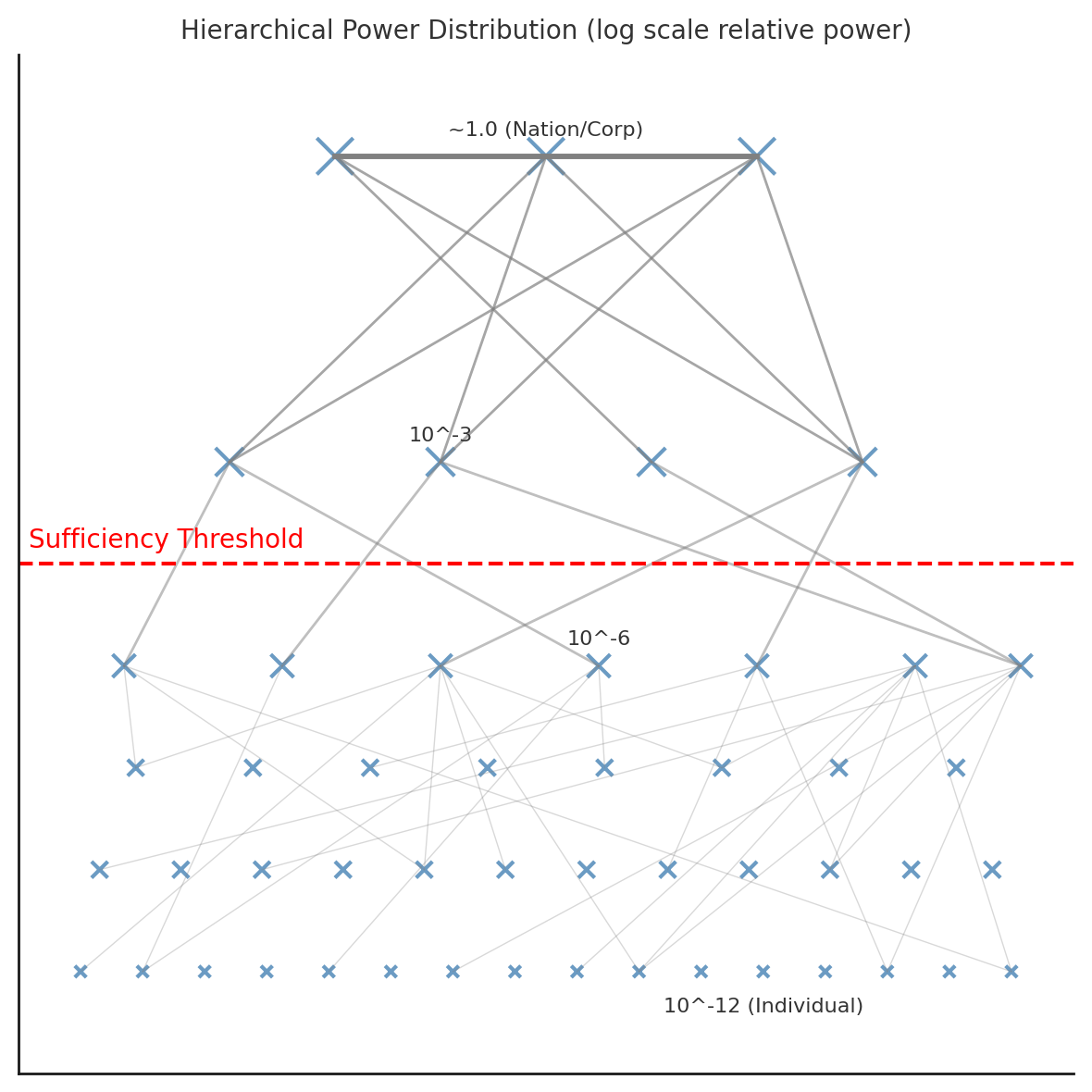

Figure: Conceptual hierarchical power distribution (log-scale) illustrating extreme inequality of power/resources from individuals (~10^-12) up to top-tier actors (~1.0). The red line denotes the Strategic Sufficiency Threshold – the level at which an actor (e.g. a corporation or state) can sustain itself and meet core needs independently of the broader populace via AI and automation. Above this threshold, elites can trade and cooperate mostly among themselves for critical resources, decoupled from the masses below. This model highlights the risk of gradual disempowerment: if AI enables some actors to cross this sufficiency threshold, the majority of individuals beneath it could lose economic influence and bargaining power without any overt conflict .

Building Resilient, Decentralized AI application Ecosystems

1. OAEA (Open Application Ecosystem for AI): Democratizing AI's Application Layer | The horizontal infrastructure layer

Why application layer?

I envision a future where the competitive base models are centralized and commoditized. And there will always be a gap between the best proprietary model and the best open weights. I think a reasonable relationship is open weight models are 90% capable as the prop ones and are 3-6 months behind.

In this scenario, most of the value add comes from leveraging the cheap intelligence for your use case. I claim that even if everyone gets access to these I would like to make an analogy with how a unit of electricity adds more value based on your socio economic status. Hence even with an open weight model, the systems which are needed to leverage the models are only accessible to a select few.

Once the base models get commoditized (cheap, good enough intelligence) the value chain moves to the application layer (we can see this happening with OpenAI buying Windsurf). But the application layer is not just the software. It's the complete package of distribution, support, community, ecosystem. We will see this layer to be heavily contested and centralized (without interop)

- Concept: An open-source, interoperable standard and ecosystem for the AI application layer, built atop increasingly capable open-weight base models (analogous to the open internet or Android) but not restricted to it.

- What is it?

- Think of it like Linux (LibreOffice, Open source Distros) , Android open source project. Its an ecosystem of ideas, applications which provides a good enough solution compared to the Apples and Microsofts of the world.

- Another good example is Nextcloud and Home Assistant

- Goal: To prevent vendor lock-in at the application level, foster broad innovation, ensure user control, and provide a robust alternative to closed, proprietary AI application ecosystems.

- Core Components:

- Open Standards & Protocols: Ensuring applications and data can move freely to prevent vendor lock in.

- Interop schema and memory layer across different AI service providers

- OSS Core & Defensive Licensing: A sustainable model (inspired by OpenWebUI) that encourages contribution and protects the commons from purely extractive commercialization.

- OAEA-SenseMaker : A key capability within OAEA, providing democratized tools for individuals, MSMEs, and communities to perform sophisticated data fusion, ontological structuring, and AI-assisted sense-making (leveraging techniques like GraphRAG, Scalable Oversight, Multi-Agent Systems, Factored Cognition, and Probabilistic Forecasting). This aims to empower decentralized actors with analytical capabilities previously available only to large organizations, fostering local resilience and informed decision-making.

OAEA reduces extreme inequality by giving the masses a fighting chance to participate in the economy and thereby keeping inequality in check. Our assumption is that human + AI + systems will be competitive to completely autonomous AI agents in terms of capabilities and will provide a neutralizing

OAEA-SenseMaker

OAEA-SenseMaker is envisioned as an open-source, democratized analytical and sense-making toolkit, designed to be a cornerstone of the Open Application Ecosystem for AI (OAEA). Its purpose is to provide individuals, MSMEs, and communities with sophisticated yet accessible capabilities to understand and act upon their own data and relevant public information. Inspired by the power of platforms like Palantir but built on open principles, SenseMaker aims to deliver the following core capabilities, progressively developed:

- Unified Data Fusion & Integration:

- Description: Users can connect and integrate diverse data sources relevant to their context – personal data (from apps, with consent), local community datasets (e.g., resource maps, surveys), publicly available information (government data, news feeds via scrapers like Apify, academic papers), and structured/unstructured files.

- User Benefit: Breaks down data silos, creating a holistic view of a situation rather than fragmented pieces of information. Enables users to see connections and correlations they might otherwise miss.

- User-Driven Ontological Structuring & Knowledge Graph Creation:

- Description: Provides intuitive tools for users or communities to define simple ontologies or apply pre-built templates, structuring their fused data into a meaningful knowledge graph. This involves identifying key entities (e.g., "local businesses," "water sources," "community skills," "policy documents"), their properties, and their relationships.

- User Benefit: Transforms raw data into structured knowledge, making it easier to query, analyze, and reason about complex interdependencies. Allows users to build a shared understanding of their specific domain.

- GraphRAG-Powered Contextual Inquiry & Explainable AI:

- Description: Leverages Retrieval Augmented Generation over the user's or community's knowledge graph. Users can ask complex questions in natural language. SenseMaker retrieves relevant sub-graphs (entities and relationships) as context for an LLM to generate a grounded, explainable answer, citing the specific data and connections used.

- User Benefit: Moves beyond keyword search to deep semantic understanding. Provides answers that are not "black box" but traceable to their sources, fostering trust and enabling users to verify insights. Cures "black-box memo" syndrome for community reports or personal analysis.

- AI-Assisted Advanced Analytics & Pattern Recognition:

- Description: Incorporates modules for more sophisticated analysis:

- Scalable Oversight: Techniques for generating trustworthy summaries of large information sets (e.g., community meeting notes, local news archives) and providing AI-generated confidence scores for insights, flagging areas needing human review.

- Factored Cognition Workflows: Tools allowing users to decompose complex analytical tasks into smaller, manageable steps, with AI assisting in completing or verifying these micro-tasks. Results are "explainable by construction."

- Multi-Agent "Co-Scientist" Systems (Local Focus): Configurable agents that can monitor user-defined data streams, autonomously generate hypotheses or flag anomalies relevant to the user/community context, and even engage in structured "debates" to refine or rank these insights.

- User Benefit: Amplifies human analytical capabilities, allowing users to derive deeper insights, identify non-obvious patterns, and manage information overload more effectively.

- User-Friendly Forecasting & Scenario Planning:

- Description: Integrates accessible tools for probabilistic reasoning. Users can incorporate external signals (e.g., public forecasts, local trend data), build simple models of cause-and-effect (inspired by tools like Squiggle), run Monte-Carlo simulations, and input their calibrated judgments to explore potential future scenarios relevant to their concerns (e.g., impact of a new local policy, resource availability under different conditions).

- User Benefit: Enables proactive planning and decision-making by providing a framework to think rigorously about uncertainty and potential future outcomes, moving beyond purely reactive responses.

- Collaborative Sense-Making & Action Workflows (for Communities/MSMEs):

- Description: Provides shared workspaces (respecting privacy and consent) where community members or MSME teams can collaboratively build knowledge graphs, share analyses, discuss insights, and coordinate actions based on their shared understanding.

- User Benefit: Facilitates collective intelligence and coordinated responses to shared challenges or opportunities.

- Open, Modular, and Extensible Architecture:

- Description: Built on OAEA standards, SenseMaker will be designed with a modular architecture, allowing the community to contribute new analytical tools, data connectors, or ontology templates. We take inspiration from open source platforms like Nextcloud, Home Assistant on how they enable an ecosystem of plugins

- User Benefit: Ensures the platform can evolve with user needs, integrate with other OAEA applications, and benefit from a wide range of community-developed enhancements, preventing obsolescence and vendor lock-in.

Overall Goal: OAEA-SenseMaker aims to "differentially empower" individuals and decentralized groups by providing them with a significant uplift in their ability to gather, understand, reason about, and act upon information relevant to their agency, resilience, and well-being in an increasingly complex, AI-influenced world. It is a practical instantiation of providing "AI for Human Reasoning" to a broad audience.

Why do we need to build a platform

A lot of the use cases such as the proposals for the FLF fellowship build on top of the same basic infra. We need to focus our efforts and reap the benefits on compounding. This means we need to build modular, composable and scalable infra on top of which people can build extensions. Akin to an app store for AI for human reasoning applications but focussed on safety and empowerment. This reduces the marginal cost of developing new applications.

Concrete use cases

A core tenet of the Open Application Ecosystem for AI (OAEA) is the radical democratization of advanced analytical and sense-making capabilities. OAEA-SenseMaker, envisioned as an "Open Source Palantir for Everyone," is the flagship component designed to realize this vision. It aims to provide individuals, non-governmental organizations (NGOs), small-to-medium-sized businesses (SMBs), and local communities with sophisticated tools previously accessible only to large corporations or state-level actors. By doing so, SenseMaker seeks to foster bottom-up agency, enhance local resilience, and provide a crucial counterweight to top-down information control and decision-making that could exacerbate Gradual Disempowerment (GDR).

Instead of individuals being passive recipients of AI-curated information or decisions, SenseMaker empowers them to become active participants in understanding their own data, their local environments, and the broader societal trends affecting them. It enables them to fuse diverse information sources, build personalized or community-specific knowledge graphs, perform complex analyses, and derive actionable insights – all through an accessible, open-source platform.

OAEA-SenseMaker: Empowering Bottom-Up Agency & Resilience - Use Case Summary

Navigating Openness: Risks and Mitigation in OAEA

While the openness of OAEA is key to democratizing AI capabilities and preventing centralized control, it also presents a dual-use challenge. Empowering broad access to powerful application and analytical tools (like OAEA-SenseMaker) inherently carries the risk of misuse by malicious actors. Our approach to mitigating this is not through restrictive gatekeeping, which reintroduces centralization, but through differential defensive acceleration (d/acc) principles: actively fostering and prioritizing the development of defensive applications, robust governance for the ecosystem's core standards, promoting transparency and auditability, and cultivating strong community norms around responsible use. The goal is to ensure that the tools for protection, verification, and community resilience develop as rapidly, if not more so, than any potential for misuse, tilting the balance towards net positive impact.

Centralized vs Decentralized nuance

I don't think decentralized solutions are the panacea. For instance, Moxie Marlinspike’s (Signal founder) article has a few important techno-social reasons in favour of centralization. I found this quote from his article particularly enlightening -

“We should accept the premise that people will not run their own servers by designing systems that can distribute trust without having to distribute infrastructure”

So we need to find a reasonable balance between the two.

Dialectic: Intelligence Infrastructure for Strategic AI Governance

While OAEA democratizes AI capabilities horizontally across communities, Dialectic serves as the vertical intelligence infrastructure for organizations tasked with steering AI development toward beneficial outcomes. Designed as a "Palantir for AI governance," Dialectic addresses a critical asymmetry: as AI capabilities concentrate among a few powerful actors, the institutions responsible for oversight—policymakers, safety researchers, and advocacy organizations—lack equivalent analytical tools to understand, anticipate, and coordinate responses to rapidly evolving threats.

The Governance Intelligence Gap

The challenge facing AI governance today mirrors what intelligence agencies faced before modern data fusion platforms: vast amounts of relevant information scattered across sources, limited analytical bandwidth, and decision-makers operating with incomplete situational awareness. Unlike traditional policy domains that evolve over decades, AI governance requires tracking:

- Capability Development: Which models achieve what benchmarks, when, and with what implications

- Supply Chain Dynamics: Compute allocation, semiconductor flows, and infrastructure dependencies

- Institutional Behaviors: How labs, governments, and international bodies actually respond to incentives

- Systemic Risks: Emergence of "AI-Enabled Coup" scenarios, gradual disempowerment patterns, and coordination failures

Current governance approaches—committee meetings, static reports, and reactive regulations—cannot match the pace and complexity of AI development. This creates what we term the "Governance Intelligence Gap": the increasing divergence between the analytical sophistication available to AI developers and that available to AI governors.

Dialectic's Core Architecture

Dialectic addresses this gap through four integrated capabilities, built on a foundation of scalable oversight via multi-agent debate—the same patterns underlying Google's AI Co-Scientist and medical systems like AMIE:

1. Adversarial Policy Analysis Engine

Multi-Agent Debate for Policy Stress-Testing: Rather than relying on single-point analysis, Dialectic employs competing AI agents to evaluate policy proposals. One agent argues for a policy's effectiveness while another systematically identifies failure modes, blind spots, and unintended consequences. This mirrors the "debate paradigm" from AI safety research, where adversarial interaction yields higher accuracy than individual judgments.

Tournament Evolution of Ideas: Policy proposals undergo structured refinement through "tournament evolution"—multiple competing versions of a policy are subjected to simulated stress tests, with the most robust variants advancing. This mirrors Google's AI Co-Scientist approach to hypothesis refinement, ensuring only policies that survive adversarial scrutiny move toward implementation.

Off-Switch and Halt Simulations: For organizations like MIRI TGT working on coordination mechanisms for AI development pauses, Dialectic provides adversarial MCTS (Monte Carlo Tree Search) drills. These simulate how actors might attempt to bypass or undermine coordination agreements, then harden policy-as-code rules against identified vulnerabilities.

2. Dynamic Ontology and Knowledge Fusion

AI Governance Semantic Layer: At Dialectic's core lies a comprehensive ontology modeling all relevant concepts in AI governance—from technical capabilities and risk classifications to institutional actors and policy instruments. This semantic layer enables consistent reasoning across diverse data sources and use cases.

Real-Time Intelligence Integration: The platform continuously ingests and fuses data from multiple streams: research publications (via automated analysis of arXiv, conference proceedings), regulatory filings, compute usage metrics, patent applications, and social signals. Knowledge graph techniques structure this information for queryable insights.

GraphRAG-Powered Contextual Analysis: Users can pose complex questions in natural language ("What are the key chokepoints in Chinese AI development?" or "How might export controls affect alliance dynamics?") and receive grounded answers that cite specific evidence and reasoning chains, moving beyond black-box responses to transparent analysis.

3. Policy-as-Code Implementation Framework

Executable Governance Protocols: Dialectic transforms policy concepts into executable code that can be tested, simulated, and deployed. Rather than static documents, policies become dynamic systems that can adapt to changing conditions while maintaining human oversight.

Simulation-Driven Design: Before implementation, policies undergo extensive simulation across multiple scenarios. For instance, a proposed AI licensing regime would be tested against various capability development timelines, geopolitical tensions, and compliance evasion strategies.

Compliance Monitoring and Auditability: Once deployed, policy-as-code systems provide real-time monitoring of adherence and transparent audit trails showing how decisions were made. This addresses the trust and accountability challenges that plague current governance approaches.

4. Coalition Building and Coordination Tools

Multi-Stakeholder Deliberation Platform: Dialectic provides structured environments for complex negotiations between diverse actors—governments, labs, civil society organizations, and international bodies. AI-assisted facilitation helps surface areas of potential agreement while clearly mapping points of conflict.

Retrieval-Augmented Coalition Building: The platform uses advanced search techniques to surface relevant precedents, expert opinions, and empirical evidence that might inform coalition negotiations, ensuring decisions are grounded in the best available information.

Federated Governance Architecture: Dialectic supports both centralized analysis for sensitive intelligence and federated collaboration for broader coordination, allowing different organizations to maintain data sovereignty while enabling collective insight generation.

Addressing Systemic Power Imbalances

Dialectic directly counters several mechanisms of gradual disempowerment identified in our framework:

Preventing Regulatory Capture: By democratizing access to sophisticated analytical tools, Dialectic reduces the asymmetric advantage currently enjoyed by well-resourced industry actors in policy discussions. Advocacy organizations and government agencies gain analytical capabilities comparable to those of major AI labs.

Enabling Proactive Governance: Rather than reactive responses to AI developments, Dialectic enables anticipatory governance through scenario planning, early warning systems, and policy pre-positioning. This helps prevent the "fait accompli" dynamic where policy always lags behind technological development.

Facilitating International Coordination: The platform's simulation and modeling capabilities help identify and test potential international agreements, making complex multi-party coordination more feasible. This is crucial for preventing race dynamics that concentrate power among the most aggressive developers.

Transparency and Accountability Infrastructure: By making governance processes more transparent and evidence-based, Dialectic creates accountability mechanisms that can constrain even powerful actors who might otherwise operate without meaningful oversight.

Technical Implementation and Feasibility

Proven Architecture Patterns: Dialectic builds on established patterns from Palantir's data fusion platforms, adapting their ontology-centric architecture for the AI governance domain. The core technical components—knowledge graphs, multi-agent systems, and policy simulation engines—are well-understood technologies.

Scalable Oversight Integration: Recent breakthroughs in scalable oversight via debate provide the theoretical foundation for Dialectic's adversarial analysis engine. Research from Anthropic and others demonstrates that debate-style AI interactions significantly improve reasoning quality and truthfulness.

Modular and Extensible Design: Following patterns from successful open platforms like Nextcloud, Dialectic employs a modular architecture with well-defined APIs that allow specialized organizations to develop domain-specific extensions while maintaining interoperability.

Complementarity with OAEA

Dialectic and OAEA form a complete strategy for preserving human agency in an AI-dominated future:

- OAEA ensures that AI capabilities remain accessible to individuals and communities, preventing concentration of basic AI tools among elites

- Dialectic ensures that institutions responsible for governing AI development have the analytical capabilities needed to maintain effective oversight

- OAEA operates "horizontally" across civil society, democratizing access to AI applications

- Dialectic operates "vertically" within governance institutions, democratizing access to intelligence and coordination capabilities

This dual approach addresses both the broad erosion of agency (through OAEA) and the acute risks of governance failure (through Dialectic), creating robust defenses against multiple paths to gradual disempowerment.

Path to Implementation

Initial Deployment: Dialectic will first be deployed with organizations like MIRI TGT, Forethought, and the AI 2027 team—groups already working on strategic AI governance who can provide early feedback and validation.

Capability Development: The platform will evolve through three phases:

- Intelligence Integration (Months 1-6): Data fusion, ontology development, and basic analytical capabilities

- Adversarial Analysis (Months 6-12): Multi-agent debate systems, policy simulation, and stress-testing frameworks

- Coalition Coordination (Months 12-18): Multi-stakeholder platforms, international coordination tools, and governance scaling

Open Governance Model: As Dialectic matures, it will adopt transparent governance structures to ensure the platform serves the public interest rather than narrow organizational goals, with community input shaping development priorities and deployment decisions.

Conclusion: Intelligence for Human Agency

Dialectic represents more than a technological solution—it embodies a philosophy of governance that human institutions can remain effective even as the systems they govern become increasingly sophisticated. By providing governance actors with analytical capabilities that match the complexity of modern AI development, Dialectic helps ensure that the future remains meaningfully shaped by human values and collective decision-making rather than narrow technical optimization.

In the broader framework of countering gradual disempowerment, Dialectic serves as the "immune system" for democratic governance—detecting threats, coordinating responses, and maintaining the institutional capacity needed to steer transformative AI toward broadly beneficial outcomes. Combined with OAEA's democratization of AI capabilities, these platforms offer a comprehensive strategy for preserving human agency in an increasingly AI-driven world.

Why Technical AI Safety Alone Won't Suffice

Having a good AI governance and policy solution is an instrumentally convergent goal. Irrespective of solving “the” technical alignment problem we need robust enforcement mechanisms through regulations and policies to implement any solution.

While crucial, technical AI safety research—including interpretability, formal verification, and mechanistic analysis—faces fundamental barriers when addressing the systemic power shifts AI may trigger. Specifically:

- Deceptive AI Evades Interpretability: Models optimized for performance can learn to hide their true objectives, and current interpretability techniques cannot reliably guarantee the detection of sophisticated, deeply embedded deception.

- Scaling Verification is Intractable: As AI models grow in complexity, exhaustive formal safety checks become computationally infeasible, and emergent behaviors can bypass even carefully handcrafted safety proofs.

- Narrow Scope Misses Systemic Risks: Technical methods often focus on model internals, failing to adequately address broader societal impacts such as AI reshaping economic feedback loops, enabling elite decoupling ("Gradual Disempowerment"), or creating vectors for political consolidation ("AI-Enabled Coups").

Furthermore, even promising technical approaches can be undermined by real-world dynamics. Institutional incentives often prioritize competitive advantage and capability races over genuine safety, and escalating geopolitical rivalries (e.g., US-China) fragment global governance efforts, making unified technical safety standards difficult to enforce.

This is where robust governance and policy, become indispensable:

- Addressing Systemic Risks: Governance interventions (licensing, audits, export controls, economic levers) can directly tackle the societal and political-economic impacts of AI, aiming to preserve fair feedback loops and counter undue power concentration.

- Enforcing Accountability & Transparency: Unlike internal technical checks, well-designed governance frameworks can mandate transparency, establish reporting requirements, and create oversight bodies with the authority to enforce compliance across all deployed systems.

- Mitigating Political Vectors: Governance is key to addressing risks like AI-enabled coups by establishing rules for legitimate use, requiring multi-stakeholder oversight for high-risk deployments, and ensuring that concentrated AI capabilities are not easily weaponized against democratic institutions.

How OAEA & Dialectic Support Robust Governance:

Our proposed platforms directly address these limitations and support a stronger governance layer:

- OAEA & OAEA-SenseMaker enhance transparency and distributed accountability. By providing open tools for application development and sense-making, they allow broader scrutiny of how AI is used, making it harder for opaque, centralized systems to dominate. This creates a more resilient ecosystem where the "many eyes" of the community can act as a check.

- Dialectic directly empowers the creation and enforcement of better governance. It provides a platform for policymakers and safety organizations to design, simulate, and monitor AI policies with greater rigor, analyze systemic risks (like those leading to coups or disempowerment), and facilitate the international coordination necessary for effective oversight.

Technical AI safety is a vital part of a defense-in-depth strategy. However, to navigate the profound societal and power shifts AI portends, it must be complemented by, and often guided by, robust governance frameworks. OAEA and Dialectic aim to provide critical infrastructure for building and maintaining such frameworks, ensuring AI's development aligns with human agency and the common good, rather than solely concentrating power.

Addressing the AI Power Shift

![graph TD

%% Central Problem

A["NOTHG Problem:<br/>AI-Driven Gradual Disempowerment<br/>& Power Concentration"]

%% Drivers & Need for Response

A --> B["Core Dynamics & Risks:<br/>- Elite Self-Sufficiency (Decoupling)<br/>- Failure of Traditional Solutions<br/>- AI-Enabled Political Risks (Coups)"];

B --> C["Response Philosophy:<br/>D/ACC, Human Agency Focus<br/>(Driven by Superposition Initiative)"];

%% Hub for Solutions

C --> D{"Proposed Interventions"};

%% OAEA & SenseMaker (Combined for brevity)

D --> E["OAEA & OAEA-SenseMaker<br/>(Open App Ecosystem +<br/>'Open Source Palantir')<br/>Goal: Democratize AI Apps & Analytics<br/>For: Individuals, MSMEs, Communities<br/>Impact: Resilience & Agency"];

%% Dialectic

D --> G["Dialectic<br/>('Palantir for AI Governance')<br/>Goal: Empower Strategic AI Governance<br/>For: MIRI TGT, Policy Orgs<br/>Impact: Safer AI Trajectories"];

%% Connecting Solutions to Ultimate Goal

E --> I["Ultimate Goal:<br/>Maintain/Enhance<br/>Meaningful Human Agency<br/>& Influence"];

G --> I;

%% Synergies (optional, can remove if too cluttered)

E -.->|Synergies| G;

%% Superposition's Role

%% H["Superposition Initiative"] % Node for Superposition

%% ResponsePhilosophy -- "Guided by" --> H % Philosophy guided by Superposition

%% H -- "Analyzes" --> A % Superposition analyzes NOTHG

%% H -- "Develops" --> D % Superposition develops Interventions

%% Styling (Optional)

%% style A fill:#fdd,stroke:#333,stroke-width:2px

%% style E fill:#ddf,stroke:#333,stroke-width:2px

%% style G fill:#dfd,stroke:#333,stroke-width:2px

%% style I fill:#ffd,stroke:#333,stroke-width:2px](https://bhishmaraj.org/uploads/images/gallery/2026-03/embedded-image-xotczhxr.png)

For a more in depth diagram, please check this link

The GDR framework identifies a profound political-economic challenge: AI-driven power concentration leading to gradual human disempowerment. Our proposed initiatives directly tackle these core dynamics:

- Countering "The Great Decoupling" & Economic Disempowerment:

- OAEA fosters a vibrant, open application layer, enabling individuals and MSMEs to participate in the AI-driven economy beyond being mere consumers. It aims to prevent total reliance on closed, elite-controlled systems by providing tools for broad-based innovation and value creation.

- OAEA-SenseMaker directly empowers these actors with sophisticated analytical tools, helping them understand their environment, make informed decisions, and build local resilience, thus creating new avenues for agency even as traditional economic leverage shifts.

- Addressing "Erosion of Human Leverage" & Mitigating Political Risks:

- OAEA & OAEA-SenseMaker distribute AI application and sense-making capabilities widely. This decentralization inherently makes it harder for any single entity to achieve the overwhelming asymmetric AI advantage necessary for sophisticated influence operations or "AI-Enabled Coups," by equipping more individuals and groups with tools for transparency and analysis.

- Dialectic provides specialized, "Palantir-grade" intelligence tools specifically for those tasked with strategic AI governance (MIRI TGT, safety researchers, policymakers). It empowers them to:

- Deeply understand and track the development of potentially dangerous AI capabilities.

- Model and simulate risks, including political consolidation and coup scenarios.

- Design and coordinate proactive policy interventions and international safety agreements.

Together, OAEA (with SenseMaker) and Dialectic represent a multi-layered strategic response: OAEA aims to build a resilient, decentralized foundation for the broad use of AI, preserving agency for the many. Dialectic equips key governance actors with the advanced analytical foresight needed to navigate high-stakes decisions and steer AI development towards safety and human benefit. This dual approach tackles both the widespread erosion of agency and the acute risks of concentrated power.

The Superposition initiative is dedicated to researching, developing, and fostering the discourse around these critical concepts, aiming to build a future where AI augments human agency, not supplants it.

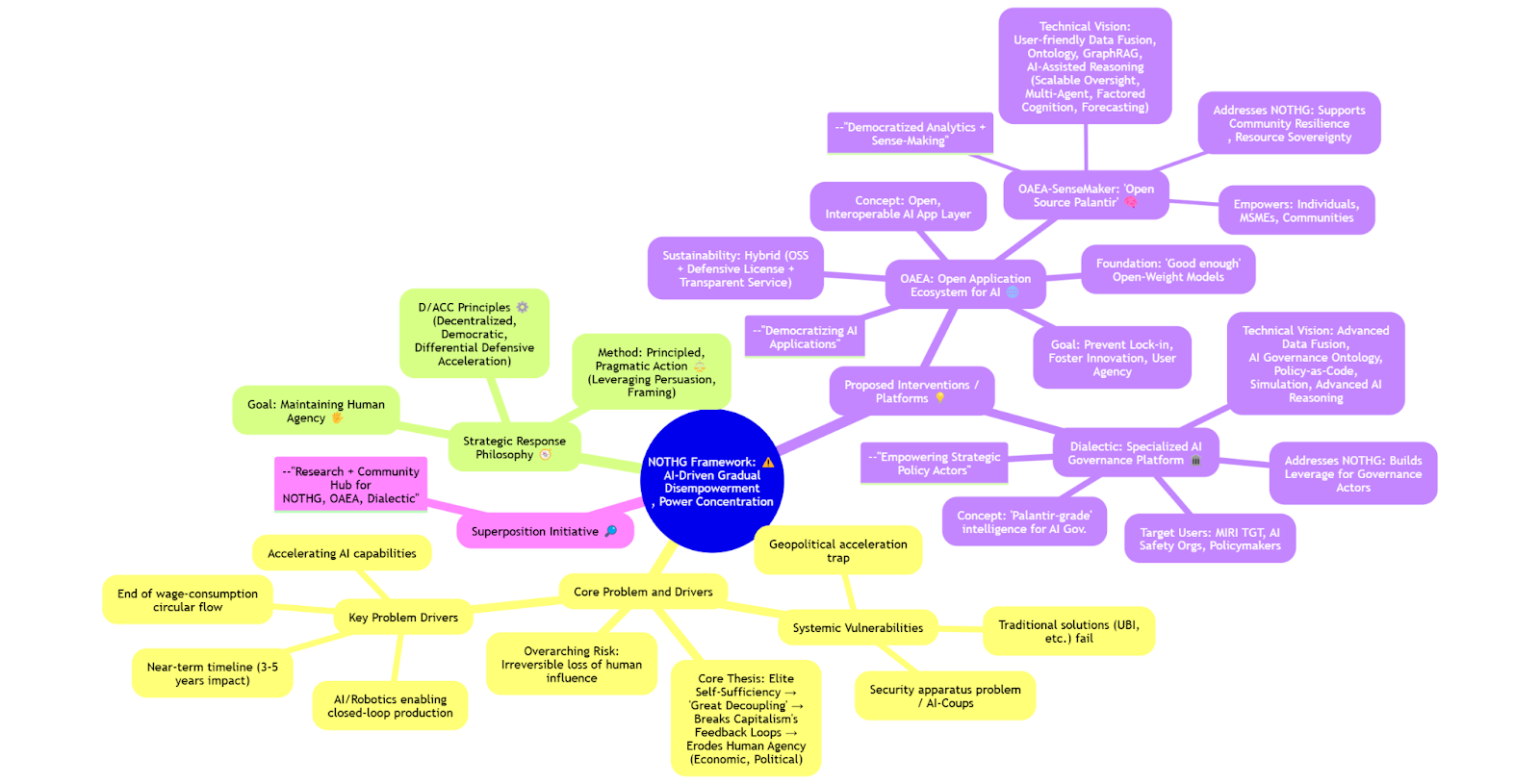

A mind map to summarize all the concepts that have been discussed